Why we Replaced Over 100,000 PostgreSQL Tables with Apache Iceberg

How we moved our entire storage layer from PostgreSQL to Apache Iceberg

Databox pulls data from over a hundred integrations like Google Analytics, HubSpot, Stripe, and Shopify. Behind every connected data source is a replication: a pipeline that continuously syncs external data into our platform. Each replication creates roughly ten PostgreSQL tables for the entities it tracks.

Multiply that by thousands of active replications, and we had more than 100,000 tables across our PostgreSQL clusters. A few terabytes of data. And we were months away from running out of disk.

And the data kept growing. Over 400% year over year, with over a hundred new replications showing up every week. We’d already hit the wall once. An emergency cleanup took 16 hours of manual work just to buy ourselves some breathing room. It worked. Temporarily.

We knew how to scale PostgreSQL, both vertically (bigger instances, higher bills) and horizontally (more clusters, more operational overhead). We’d done both. Neither solved the underlying problem: infrastructure costs growing faster than the business, and every new cluster multiplying the surface area we had to manage.

We researched and tested alternatives. Managed solutions that promised horizontal scalability out of the box. Each one came with its own trade-offs: vendor lock-in, missing features, or operational complexity that just shifted the problem somewhere else.

This wasn’t a tech debt problem. It was a capacity crisis with a deadline.

What We Were Running

Our storage layer was a multi-tenant PostgreSQL setup. One schema per replication. Inside each schema, we keep a pair of tables for every entity: a raw table holding the ingested data as JSONB, and a final table with a structured schema derived from the entity’s properties.

This design gave us clean isolation. Each replication lived in its own namespace. No shared tables, no cross-tenant queries. Every read and every write targeted a single replication. Always.

It also gave us flexibility. JSONB in the raw tables meant we could ingest any shape of data without upfront schema definitions. The final tables handled the structure. This worked well when we had hundreds of replications. At thousands, the cost of that flexibility became clear.

Tens of thousands of tables across the clusters. Each one with its own indexes, its own vacuum schedules, its own storage footprint. PostgreSQL doesn’t care if a table has ten rows or ten million. It still tracks it, maintains it, and plans queries against it. Multiply that overhead by more than 100,000 and the operational weight becomes the product’s defining constraint.

The architecture served us well. Until the data outgrew it.

What Is Apache Iceberg

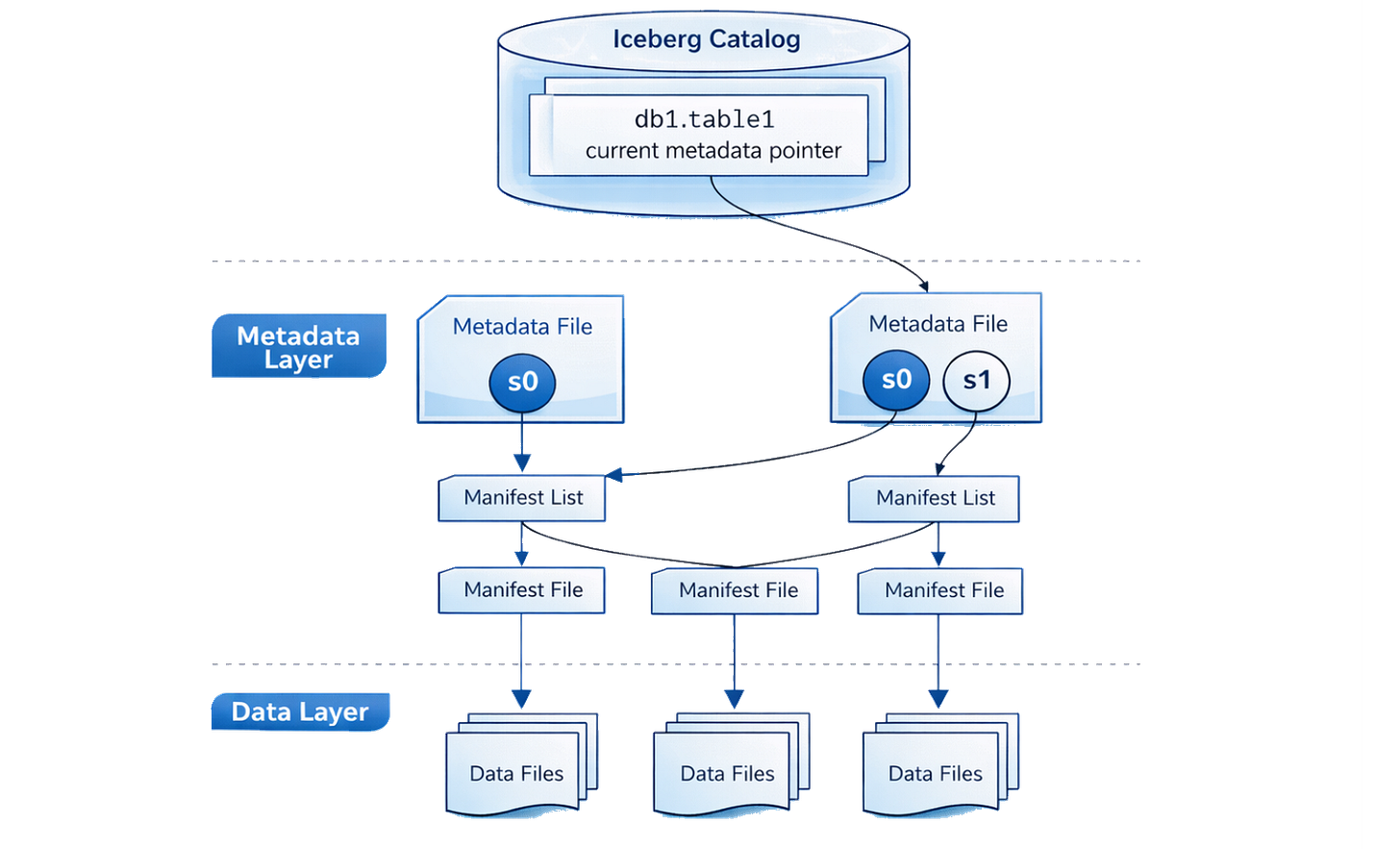

Apache Iceberg is an open table format for large analytic datasets. It’s not a database. It’s not a query engine. It’s a layer that sits between your storage (typically object storage like S3) and whatever compute engine you use to read or write data.

The architecture has three parts. Data files, usually Parquet, hold the actual rows. A metadata layer (manifest files and snapshots) tracks which files belong to which table and in what state. And a catalog keeps a pointer to the current snapshot, so any engine knows where to start reading.

ACID transactions on object storage. Writes produce new snapshots atomically, so readers never see partial data.

Schema evolution without rewriting files. Add a column, rename a field, and existing data stays untouched.

Hidden partitioning that optimizes query performance without exposing partition schemes to users.

Time travel through snapshot history, letting you roll back, audit, or debug by pointing at an earlier state.

Engine-agnostic. Because Iceberg is a format, not an engine, any compatible tool can read or write the same tables: Trino, Spark, DuckDB, Flink, and others.

For a multi-tenant SaaS platform like ours, two properties mattered most. Storage costs scale with actual data, not provisioned capacity. And compute scales independently. You add query power without touching storage, and vice versa.

That decoupling changed the economics entirely.

Why Iceberg

We didn’t start with Iceberg. We started with a list of options and a set of constraints.

The simplest path was scaling PostgreSQL: more clusters, bigger instances, managed sharding. We knew how to do it. But we’d already seen where that road leads: every new cluster adds operational overhead, and costs scale linearly with data. At our growth rate, that math doesn’t work.

We evaluated several alternatives. ClickHouse had excellent analytical performance, but our analytics layer was already built on DuckDB. We didn’t need another query engine. We needed better storage. AWS S3 Tables offered the lowest operational overhead, but capped at 10,000 tables per bucket and 100,000 per account per region. With tens of thousands of tables already, we’d hit those limits on day one. Plus deep vendor lock-in and a limited ecosystem. DuckLake looked promising for analytics, but too early for production multi-tenant workloads.

We spent serious time with Nessie, an Iceberg catalog with git-like branching. But its branching model prevents automatic cleanup of data files. Orphaned Parquet files accumulate in S3, and in the open-source version, the only cleanup path is a separate Java CLI tool you have to run and maintain yourself.

Each option solved part of the problem. None solved all of it without introducing a trade-off we weren’t willing to accept.

What kept pulling us back to Iceberg was the fit with our actual data patterns. Our workloads are append-heavy: replications continuously sync new data, rarely update existing rows. Reads are single-tenant. Every query targets one replication, never joins across them. And the data is semi-structured, which maps naturally to Parquet’s columnar format.

Append-heavy writes. Isolated reads. Columnar storage. That’s exactly what Iceberg was built for.

The question wasn’t whether to use Iceberg. It was which stack to build around it.

The Architecture We Chose

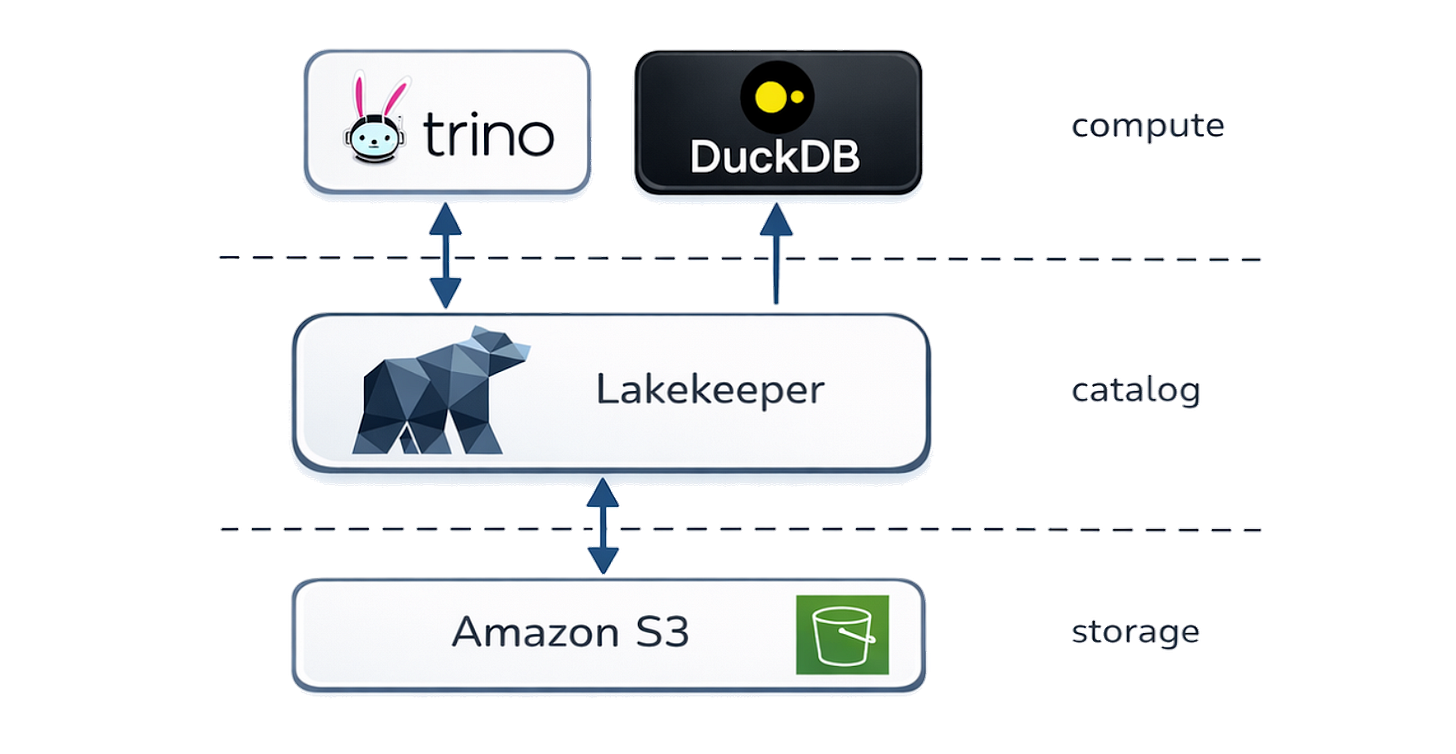

We landed on four components. S3 for storage. Lakekeeper as the Iceberg catalog. Trino for writes. DuckDB for reads.

S3 is the foundation. Parquet files land here and never move. Storage costs scale with actual data, not provisioned capacity or instance sizing. When a replication grows, we pay for the bytes. Nothing else.

Lakekeeper manages the catalog, the metadata layer that tracks which files belong to which tables and in what state. We chose it over Nessie for one reason: when you drop a table or namespace, Lakekeeper handles cleanup of the underlying S3 files through an automated cleanup pipeline. No orphaned data. No separate garbage collection tooling. Predictable storage costs by default.

Trino handles writes to Iceberg. While primarily known as a query engine, Trino supports full INSERT, UPDATE, DELETE, and MERGE operations on Iceberg tables. A coordinator node distributes work across a pool of workers that scales independently of storage. When volume spikes, we add workers. When it calms down, we scale back. The data stays where it is.

DuckDB handles all reads. This was already our analytics engine, so the integration was natural. DuckDB reads Parquet files directly from S3, with no need to route analytical queries through Trino. This cut our query volume against the compute layer by over 95%.

The isolation model carried over from PostgreSQL: one namespace per replication. Each replication gets its own Iceberg namespace with its own tables. Lookups are constant-time regardless of how many replications exist. No shared state, no cross-tenant contention. The same isolation we had before, without the operational weight of tens of thousands of PostgreSQL tables.

The Migration

We weren’t going to flip a switch. With thousands of active replications syncing data continuously, any migration had to be zero-downtime and reversible at every step.

We broke it into five phases.

Dual Writes. Every replication writes to both PostgreSQL and Iceberg simultaneously. We started with ten replications, watched for issues, then ramped to a hundred, then five hundred, then all of them. PostgreSQL remained the source of truth throughout.

Backfill. Historical data had to move. We ran batch migrations with multiple concurrent streams, working through the backlog replication by replication. No big bang. No downtime windows.

Validation. Row counts, sample data comparisons, schema verification, all per-replication. Every replication had to pass before we moved forward. We didn’t trust aggregates. We validated individually.

Switch Reads. Gradually shifted read traffic from PostgreSQL to Iceberg, one integration at a time. This also meant switching the source of truth. Iceberg was now the authoritative store. Latency and error rates were monitored at each step. If anything looked off, we rolled back that integration and investigated.

Cutover. Disabled dual writes. PostgreSQL went read-only as a safety net for a few weeks. Then we decommissioned it.

The whole migration took a small team a few weeks. The guiding principle was simple: every phase has a rollback. We never burned the bridge behind us.

The Numbers

The economics shifted immediately.

Infrastructure costs dropped by roughly two-thirds on day one. At our projected growth (tens of terabytes within three years), the savings widen further. What would have been a nearly 5x increase in PostgreSQL costs becomes a fraction of that on Iceberg. Over three years, we’re looking at over 80% in total cost savings.

The operational side changed just as much. PostgreSQL at scale demanded constant attention: cluster management, vacuum tuning, capacity planning, backup validation. That overhead grew with every new cluster. With Iceberg, the operational footprint shrank to a quarter of what it was. S3 doesn’t need vacuuming. Lakekeeper doesn’t need capacity planning. Trino workers scale without manual intervention.

The storage footprint shrank too. Parquet’s columnar format compresses data far more efficiently than PostgreSQL’s row-based storage, especially for semi-structured data that was previously stored as JSONB, where key names are repeated in every row. In Parquet, they exist once as column metadata. For our workloads, we’re seeing roughly 5-10x reduction in raw storage size.

Storage now scales sub-linearly with data growth. Object storage pricing is a function of bytes stored and requests made, not instance types, provisioned IOPS, or reserved capacity. The more we grow, the wider the gap between what we’d have paid and what we actually pay.

Read performance actually improved a bit, 33% faster than PostgreSQL at the 95th percentile.

The write side is a different story. Writes to Iceberg are roughly 200x slower than PostgreSQL. About 30 seconds versus 140 milliseconds at the 95th percentile. In isolation, those numbers look dramatic. In the context of an entire sync (which includes API calls, data extraction, deduplication, and loading) the write step is still a small portion of the total cycle time. Replications sync in the background. Users never wait on a write.

Trade-offs

We’d be lying if we said this was strictly better in every dimension.

PostgreSQL is one thing. You deploy it, you query it, you back it up. Our new stack is four things (S3, Lakekeeper, Trino, DuckDB), each with its own failure modes, its own configuration surface, its own upgrade cycle. The operational complexity didn’t disappear. It changed shape.

Lakekeeper is a younger project than Nessie. Smaller community, fewer production references, less battle-tested documentation. We accepted that trade-off because automatic storage cleanup was non-negotiable for us. But it means we’re closer to the frontier than we’d normally prefer.

DuckDB is exceptional for analytical reads. It’s also still maturing as a production component in multi-tenant architectures. We monitor it closely.

Trino cluster management isn’t zero-ops. Workers need right-sizing, the coordinator needs monitoring, and query planning requires attention as workloads evolve. It’s lighter than managing multiple PostgreSQL clusters, but it’s not nothing.

None of these are reasons not to make the move. They’re reasons to go in with clear expectations.

What Comes Next

This migration isn’t an isolated infrastructure decision. It’s part of how we’re rethinking the Databox platform from the ground up.

PostgreSQL was the right choice when we started. Iceberg is the right choice for what we’ve become. Separation of storage and compute isn’t just a cost optimization. It’s an architecture that compounds. Every new replication is cheaper to store, cheaper to query, and easier to manage than the one before it. The marginal cost of growth goes down, not up.

If your database is groaning under analytical workloads it was never designed for, look at what happens when you stop scaling the database and start rethinking the storage.